The immensely powerful aggregation website Metacritic just got a little more powerful, partnering up with Amazon, the biggest online retailer in the world, to display Metascores on video game pages.

This appears to be a quiet launch — rolling out gradually over the course of the week — but that Metascore is anything but quiet. It’s right in your face, and it will likely have a significant impact on Amazon’s game sales.

That’s bad news for anyone who cares about video games, for a number of reasons:

1) Metacritic’s system is faulty. I’ve written extensively about the problems with Metacritic — how their scores remove nuance and ambiguity; how game publishers have influenced and tampered with scores; how Metascores affect which game studios stay afloat; how Metacritic culture has actively impacted the way some developers make games. Check out my full report from last year to read just how Metacritic affects the video game industry. It’s not comforting.

2) Games change; Metascores don’t. No matter how many times a game is patched or tweaked or improved in any way, that big ol’ number won’t change. Let’s say reviewers give something a low score because of bugs, and over the next few months, the developers squash all those issues in subsequent patches. The Metascore will stay the same. “Metacritic scores really are that snapshot in time when a game is released, or close to after it’s released,” Metacritic boss Marc Doyle told me last year.

That might be a helpful number when a game first comes out, but for older games, Metascores aren’t just obsolete — they can be actively misleading. Maybe Amazon should warn readers that Metascores represent reviews as they were when the game was released?

3) Polarising games are treated as “average.”

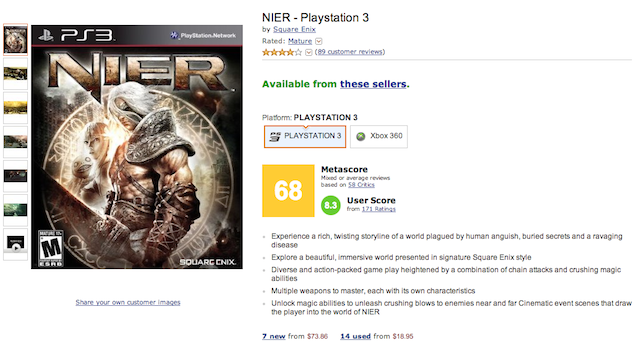

Look at Nier, an action-RPG with a 68 on Metacritic:

That big yellow is supposed to mean “average,” but really, despite the collection of 7/10s, it’s hard to find people who look at Nier as an “average” game. Nier is polarising. People either love it or hate it. Saddling the game with a 68 — a bad score, by most accounts — does a disservice to people who might love the weirdness of a game like this, or many other bizarre titles that sit in the 60s and 70s on Metacritic.
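To make the point concrete, here is a minimal sketch of how a single averaged number can hide a love-it-or-hate-it split. The two score lists are invented for illustration (they are not Nier's actual reviews), and the helper name `summarise` is hypothetical.

```python
from statistics import mean, pstdev

def summarise(scores):
    """Return (average, spread) for a list of review scores out of 100."""
    return mean(scores), pstdev(scores)

# Hypothetical scores: critics broadly agree the game is middling.
consensus_game = [68, 70, 66, 69, 67, 68]

# Hypothetical scores: critics are split between love and hate.
polarising_game = [95, 90, 95, 40, 45, 43]

consensus_avg, consensus_spread = summarise(consensus_game)
polarising_avg, polarising_spread = summarise(polarising_game)

# Both games land on the same headline number...
print(consensus_avg, polarising_avg)
# ...but the spread shows that almost nobody actually rated the second game "average".
print(round(consensus_spread, 1), round(polarising_spread, 1))
```

Under these made-up numbers, both games would wear the same yellow badge, even though no individual critic scored the second one anywhere near it.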

4) Score aggregation poisons discussions and invites unfair comparisons.

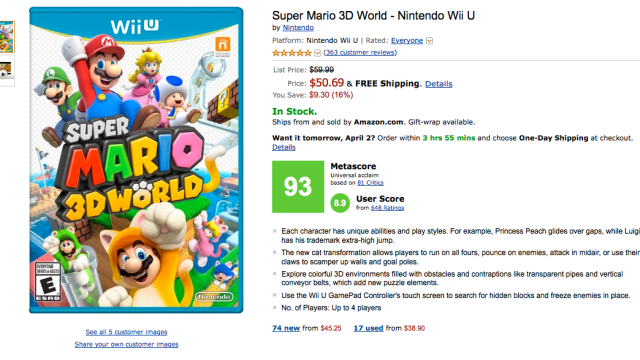

While it is impossible to compare, say, Super Mario 3D World to The Last of Us, Metacritic invites us to do just that. Mario has a 93; The Last of Us has a 95. By Metacritic’s, and now Amazon’s, definition, The Last of Us is two points better than Super Mario 3D World, even though one game is a cartoon platformer and the other is a cinematic zombie adventure game. Trying to quantify a video game’s quality encourages absurd conversations and comparisons, and teaches readers to focus on the wrong things. It’s discouraging to see Amazon participate in that culture.

As the critic and games writer Tom Bissell once told me in an email: “Metacritic encourages the fallacy that all opinions should be weighted equally, and that a ‘bad’ review is an unenthusiastic review. But that’s not true. There are some games I am *more* likely to play when a certain critic gives them what Metacritic regards as a ‘bad’ review. Metacritic leaves no room to discuss, much less pursue, guilty-pleasure games, noble failure games, or divisive games. Everything’s just a 7, or an 8, or a 6.5. That’s the least interesting conversation I can imagine.”

5) Review scores mean different things to different people.

Here’s how the gaming website Polygon describes a 7/10:

Sevens are good games that may even have some great parts, but they also have some big “buts.” They often don’t do much with their concepts, or they have interesting concepts but don’t do much with their mechanics. They can be recommended with several caveats.

And here’s how the magazine Game Informer describes a 7/10:

Average. The game’s features may work, but are nothing that even casual players haven’t seen before. A decent game from beginning to end.

Those are two drastically different ways to define the same score, which renders the two numbers meaningless when averaged or stacked up against one another. Polygon’s 7 is different from Game Informer’s 7. Yet review roundups and aggregation websites like Metacritic don’t take that into account. How can you trust an average when everyone’s working on a different scale?
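A small sketch of the problem, using only the two definitions quoted above. The `RUBRICS` table and the `describe` helper are hypothetical names for illustration, and the labels are shortened paraphrases of each outlet's wording, not an official mapping.

```python
# Paraphrased from the two outlet descriptions of a 7/10 quoted above.
RUBRICS = {
    "Polygon": {7: "good, with caveats"},
    "Game Informer": {7: "average"},
}

def describe(outlet, score):
    """Look up what a given score means on a given outlet's own scale."""
    return RUBRICS[outlet][score]

reviews = [("Polygon", 7), ("Game Informer", 7)]

# A naive aggregate treats the two 7s as the same thing.
naive_average = sum(score for _, score in reviews) / len(reviews)

# Reading each score on its own outlet's scale tells a different story.
meanings = {outlet: describe(outlet, score) for outlet, score in reviews}

print(naive_average)
print(meanings)
```

The average comes out as a tidy 7, but it blends one critic saying "good" with another saying "average", which is exactly the information a shopper would want kept apart.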

I don’t think there are ill intentions here. Amazon is likely embracing Metacritic as a way to serve their users — after all, these scores are designed to help people sort out what’s worth their time and money. But the consequences — video game publishers and developers working even harder not to experiment or make games better but to improve their Metascores — could be really bad news.

Comments

35 responses to “Amazon Adding Metacritic Scores Is Bad News For Everyone”

An average game on metacritic is an 85. And if you look deeper at the reviews: “9/10 a great game *lists 20 points which make the game terrible* but yeah good game”

Haha! and if you thought people low-grade bombed Metacritic before…

Correct me if I’m wrong, but Steam already includes Metacritic scores on its store page, so isn’t Amazon just delivering what the largest digital games store already does?

Yep, was going to point this same thing out. It’s just a display, you can’t add new reviews without going through to Metacritic directly. Same as Steam.

I thought that too but now I’m looking for it I can’t seem to find it on any of the Steam store pages.

[Edit: Ok. I found it on some pages but not others. I will say Steam are a bit more subtle about it and more importantly just because Steam does something doesn’t make it a good idea. =P]

I don’t look at Metacritic at all; I use the user reviews on Steam with the thumbs up or down system. There are always great reviews and perspectives on there, written by gamers, that tell you exactly what you want to know.

I think Steam only highlights it for high-scoring games.

Positioning would be probably the biggest difference.

Steam puts it in the sidebar, beneath things like whether the game has Cloud Saving or Controller Support. It’s also had almost all the styling stripped from it; all there is is a relatively small number (Which is also always the same colour) and a small Metacritic logo. On some pages it’s actually rather hard to find.

On the Amazon pages it’s front and center and it has its own dedicated colourful box. The only things given higher priority in the listing are the Title, Price, Shipping Information and Platform.

I don’t use Steam often (not a PC gamer), but I think the reason this needs to be pointed out is because Amazon caters to a larger audience than just gamers. On Steam, most of the audience is likely informed about games and the Metacritic score probably influences purchases less whereas if you look at Amazon, where maybe a mother would go online and buy games for their kids, the score could be a bigger factor in the purchase.

I never use or read Metacritic, it is the North Korea of gaming reviews

It’s useful as a compilation source for other reviews, since you can click through to read the full reviews for each source. Just ignore both scores, and for the love of games ignore the user reviews, most of them are written by people who have never played the game.

I get that, but the professional and public bias is enough to keep me away. I have gone off many review sites due to how unreliable they have become.

There are very few places I go to get reviews anymore. I have taken a liking to Angry Joe actually, love or hate the game he can still compile a good list of pros and cons objectively.

I like Angry Joe, though I feel like he really misses the mark sometimes. Same with TotalBiscuit, but Angry Joe’s fans tend to latch on to things he says a bit more aggressively than TB’s fans =)

I find it’s still a good quick indicator. Green is “you’ll probably like this”, yellow is “you might like this”, red is “you probably won’t like this”. In all cases I check the reviews to see what the deal is. It’s just nice when you’re browsing games that there are links to a centralised source that will always have information about users’ experiences.

Not sure why this is bad? It’s just a guide (like any review system) for people to gauge whether to spend money on a game or not.

Also, it’s good the scores don’t change, games shouldn’t be released if they are a buggy mess, and just because you decide to ‘fix’ your game down the track doesn’t mean you should get a better score. How about not releasing a POS in the first place.

Just checked out amazon but I see no Metascore appearing anywhere.

I only read the User Score and the comments rather than the paid-to-write reviews that make up the final Metascore. I find it rather pointless to read big company reviews, since they have to maintain their relationship with publishers, so they won’t bash a game even when it is bad and they give praise to the most repetitive game (CoD).

User reviews are worse. If the game has one controversial feature, guaranteed it’ll be flooded with thousands of zero scores from people who didn’t even play the game just because they want to trash the rating because of that one thing they don’t like, even if the rest of the game is actually pretty good.

I’d say if you don’t like the critic score, ditch Metacritic altogether and just go with reviewers you trust. I like Jim Sterling, Angry Joe and TotalBiscuit myself.

Totally understand where you are coming from, but I ignore those troll reviews. There are quite a few nice and constructive reviews by users, and those are the ones that matter. I just want to know what another person who is a fan of the genre or series thinks of the game, and usually it helps me a lot.

None of these things would be an issue if it was made clear what Metacritic is and how it works.

Of course that won’t be happening.

OTOH Amazon’s own scores are broken. E.g. a 10/10 product will get 0/10 from some numpty because their particular copy was defective, or because Amazon stuffed up shipping.

How is this going to be any worse than the giant clusterfrak that is Amazon’s in-house review system where people can give products one star because it was delivered a day late, or 5 stars to a product that hasn’t been released or they don’t own? You can’t even tell for sure whether you’re looking at the review for the version of your product released previously or the current one, because they collect all reviews for that title regardless of format or version. They really should get around to fixing their own review system, but a flawed aggregate of critic reviews is a better metric than amazon users.

At least for games that contain massive game-breaking bugs that make them unplayable, it may give the developers some incentive not to troubleshoot live. Otherwise, the reviewers should do some due diligence: if they believe a bug may be fixed at a future time, they can wait and then submit the review to Metacritic.

I hate to say this, but you’re talking crap. The games already have Amazon’s internal ratings and reviews and they’ll be subject to the same faults.

This isn’t the fault of Metacritic or reviewers. They are calling a spade a spade.

Sounds to me like game developers/publishers need to spend more time killing bugs before release and be more careful.

Way too many games these days are put to market with a lot of bad bugs in them, with the attitude of “oh we’ll fix them in a patch”. This attitude needs to change. It wasn’t all that long ago that console games could not be patched and developers needed to make sure they got it right before going to market, but now that consoles have internet connectivity all of a sudden it’s fine to release a buggy game and patch it later.

If you release a buggy game to market, don’t be surprised by your review scores. Reviewers review a game on release, not 6 months later after you have ironed the bad bugs out.

Yeah – if you want a good metacritic score, release a quality product. Enough of this ‘we’ll fix it later’ attitude.

How is 68 an average game? I would have thought “average” would have been about 47 – 53. What is basically a 7/10 is in no way an “average” game.

now it seems 50% is unplayable crap, 70ish is average, 80-90 is good.

When it really shouldn’t be – 5/10 should be half of what a perfect game is – therefore average.

There’s a few reasons for it, but most games tend to be reviewed on the 7-10 scale as it’s sometimes referred to.

The idea comes about because the middle ground, a 5, tends to be seen as a truly mediocre game rather than an average one. It’s something uninspired and banal, but without anything really wrong.

Below 5 is generally reserved for broken messes that shouldn’t have been released at all and that right there is the crux. By definition, there shouldn’t be any games being released that are this bad so of course there are much fewer examples of those.

Take a look at the two examples of criteria for 7s in the article, quite different but there’s the implication that they’re working products and are decent. Which most games released actually are.

Basically, because they’re a technical product in many ways, they get points simply for working, and those last few points on the scale start to become the subjective opinion portion of the review.

Which has the negative effect that people like me now see 7 as a baseline, less than that means something went wrong or more means something interesting is there.

I did not know that about reviewing. I still think 5 should be “it works” and anything above that subjective, but that’s just my opinion 😛

I agree, it makes a lot of sense but like many traditional things it is a bit counter-intuitive.

Movie and other reviews are often like that, usually without regard to technical strengths. At least traditionally, with an average movie being scored 2-3 out of 5.

But it’s rather ingrained in the culture now, not sure how you’d go about changing it… That said, I do appreciate the idea of scoreless reviews which work much better for subjective content without inaccurate quantifying.

The User Score is a far better indicator anyway. In an age where reviewers constantly give out questionably inflated review scores for games, the User Score gives a way more realistic expectation of how good a game will be. Sure, there are people who will rate a 1/10 on a game because it pushes some agenda they don’t agree with, but they’re in the vast, vast minority.

Look at the Mass Effect series on PC for example:

ME1 – Critic Score: 89/100 – User Score: 8.6/10

ME2 – Critic Score: 94/100 – User Score: 8.7/10

ME3 – Critic Score: 88/100 – User Score: 5.1/10

ME1 and ME2 were both great games but ME3 really dropped the ball with the “pick a colour” ending that disregards everything else you did in the series, and I can see why points would be knocked off. 5/10 means it’s an average game. It’s not great but it’s not terrible.

Dragon Age series on PC:

DA:O – Critic Score: 91/100 – User Score: 8.5/10

DA2 – Critic Score: 82/100 – User Score: 4.3/10

DA:O is widely seen as a good, solid game. DA2 was widely seen as an inferior game, and an average one overall at most.

Thief-

Critic Score: 70/100 – User Score: 5.7/10

It’s fair to say Thief was painfully average and the User Score reflects that.

The Critic Score system sucks, but the User Score- that’s what you want to look for.

User score is the worst. There are so many games with crappy user scores that are good games. It’s all about perspective, though.

For me Thief was an amazing game, and I think it deserves at least the 70 it got, if not more.

Plus, user scores are always skewed by 0/10 reviews from people who have never even played the game.

Which kind of also disproves how good it can work. Rating the entire Mass Effect 3 game on the ending? That’s so lame.

Who needs numbers? Watch a Youtube gameplay video and decide for yourself.

If you can’t give a definitive reason for each point of the total score, then it’s a terrible way to conduct any form of review. (E.g. in a math test you get 1 mark for a correct answer.) This is impossible to do in any form of media review, and at the end you’re left with an arbitrary score based on opinion rather than actual merit. The better way, I think, is to list the pros and cons so readers can make an informed choice on whether the game is for them or not.