Intel gave a presentation at GDC a few weeks back, but I’m guessing nobody actually watched it, because it took until this week for anyone to notice this absolutely absurd pitch from the company: it wants to use AI to monitor and censor “hate speech” in your online voice chat, and to let users toggle just how much hate they want to hear.

It’s a service called Bleep, launching later this year, which Intel says “is a user-facing application that uses AI to detect and redact audio based on user preferences”. In practice, that means it will monitor audio as it comes out of your system and mute or beep your speakers or headphones when it detects bad words.

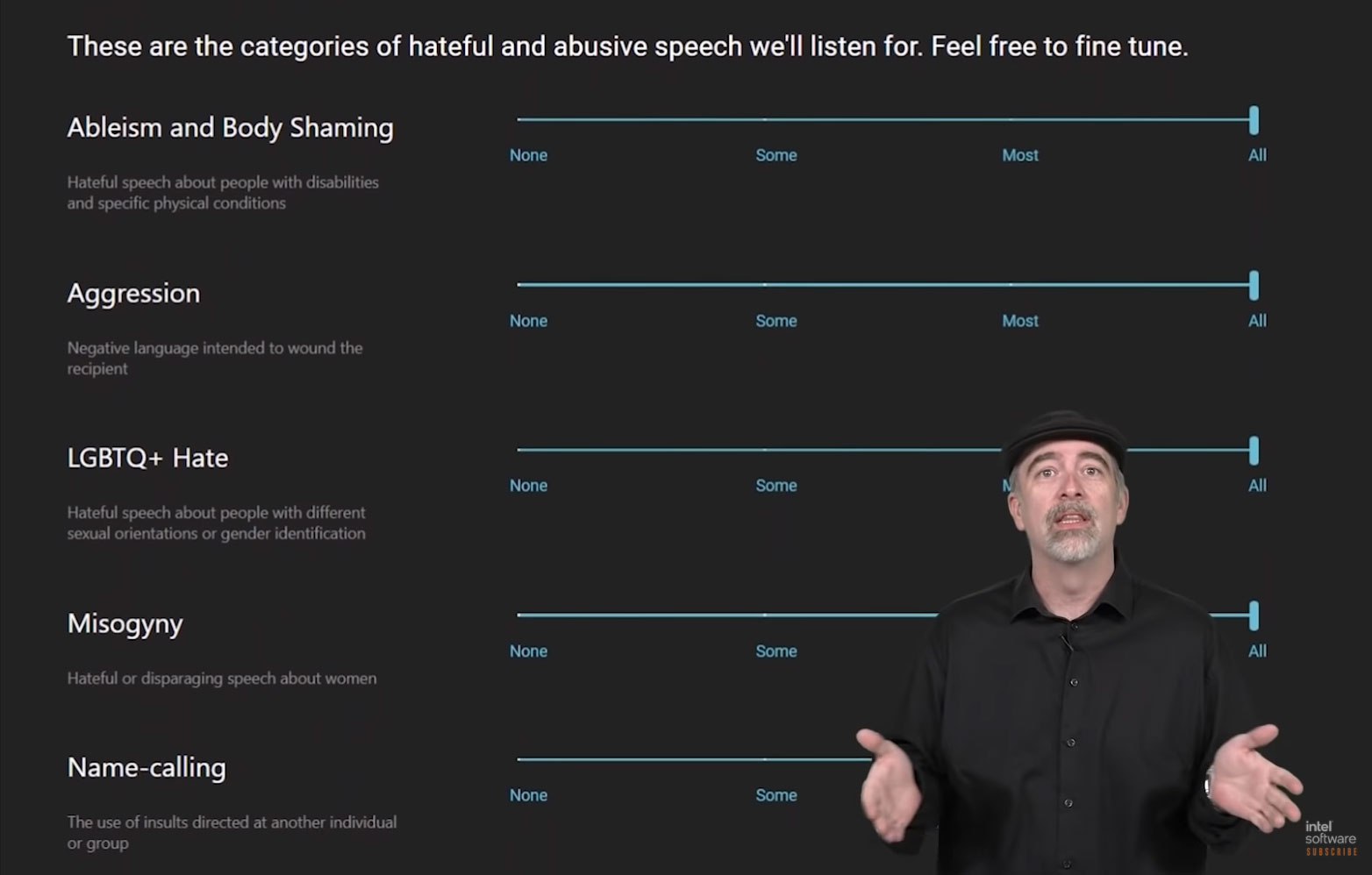

It’ll detect those words using AI, but it’s the user preferences part of this that is the most hilarious/horrifying. Here’s a look at Bleep’s backend settings, and it is a technological hellscape.

Feel free to fine-tune! Would you like to hear people body-shaming you? Oh, you would? But only a little? OK, sure!

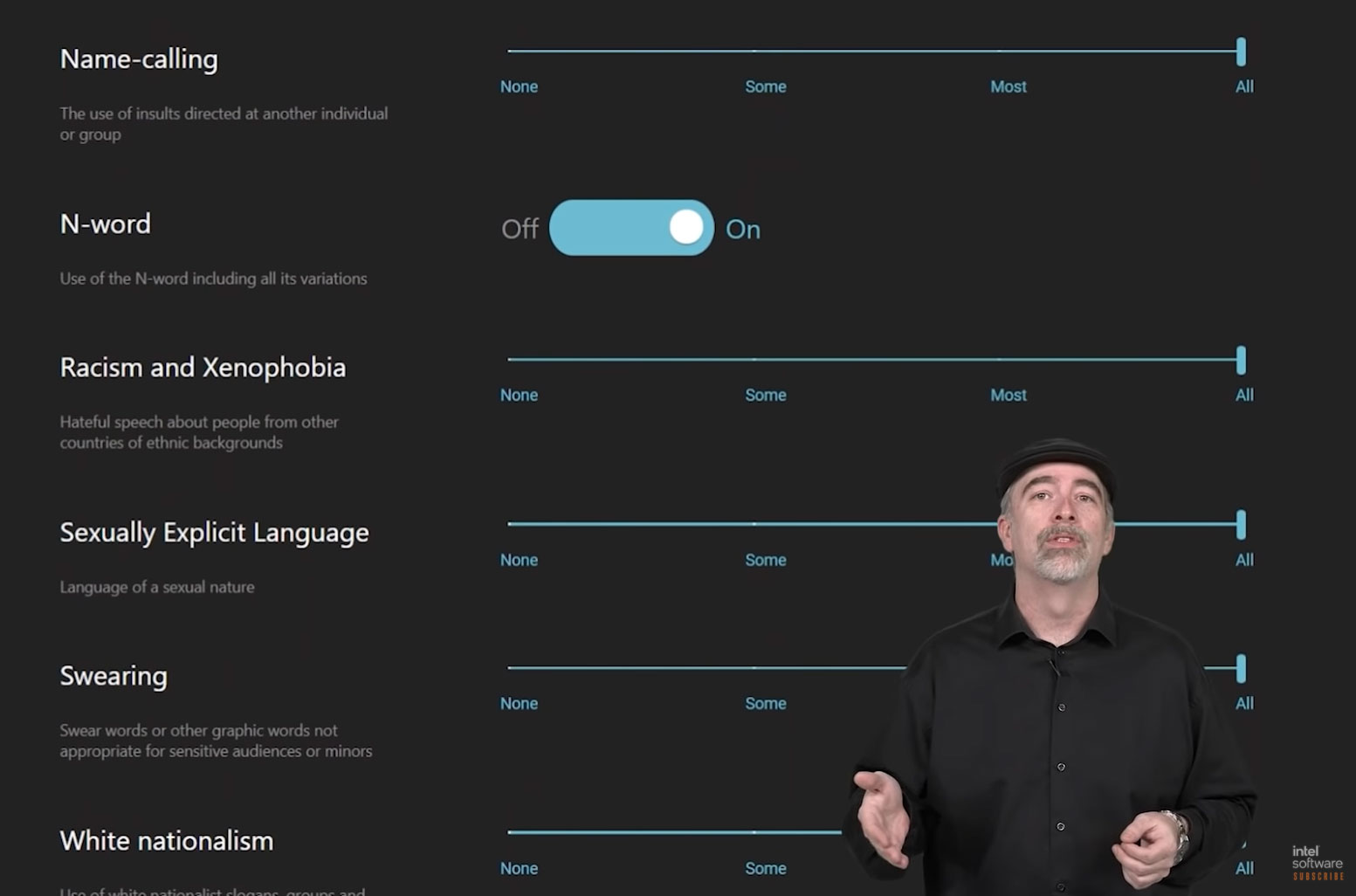

So many choices! I deeply appreciate the fact that I can hear someone screaming white nationalist taunts down a microphone only “most” of the time (sometimes you need a break, after all!), and I will be thinking very hard about whether I want to toggle that N-word switch to its “on” or “off” position.

It’s ghastly that something like this ever left a whiteboard, let alone made it all the way into a major presentation. But then, we’re years past the point where we should expect companies like Intel to think about anything except ways they can waste millions trying to use their own technology to combat deeply human problems.

Maybe there was a good intention here at some point. Letting people enjoy a safer online experience is, after all, a very good thing! But this, this is not the way to do it. Hate speech is something to be confronted through education and fought, not toggled on a settings screen.

You can watch the relevant part of Intel’s presentation at around the 29:30 mark of the video.
