After months of speculation and anticipation, Nvidia’s latest piece of hardware you put in your computer to make things look pretty is here. The GeForce GTX 1080 is much better than the pieces of hardware you put in your computer to make things look pretty that came before it.

Which is great, because introducing a new flagship product that performed worse than the previous one would be hilarious, but also very sad. The GTX 1080 is neither hilarious nor sad (box quotable moment alert).

Technology-wise the GTX 1080 is a major leap forward for Nvidia. The company’s been using a 28 nanometre (nm) fabrication process (fab) for its GPUs since 2012, spanning two generations of architecture (Kepler and Maxwell). A drop to 20nm was planned and then scrapped, and now gaming-grade Pascal arrives in 16nm size. The smaller fab size coupled with the use of FinFET 3D transistor technology makes for a smaller, more densely-packed GPU that runs cooler and is much more energy efficient.

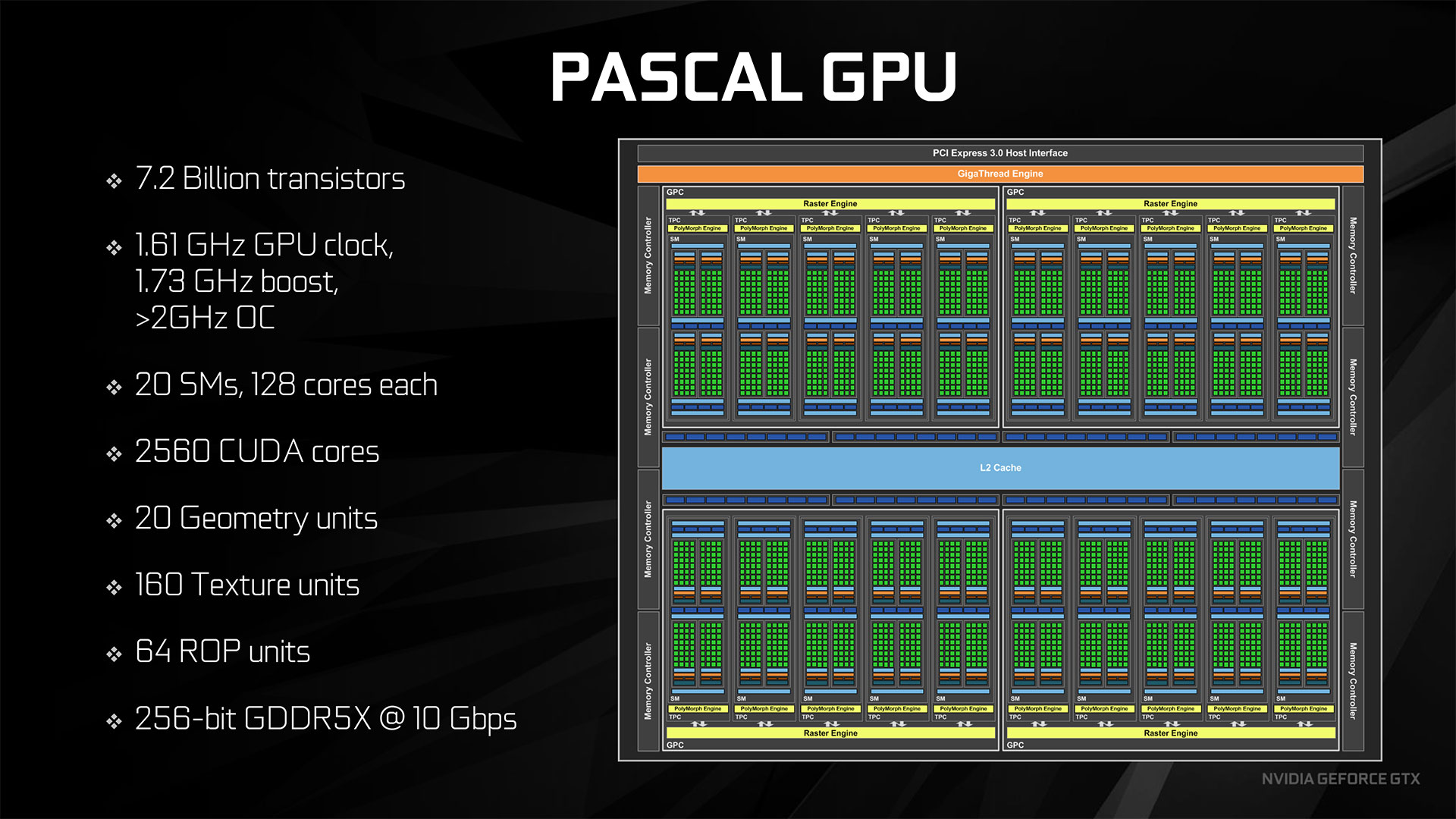

The GTX Titan X, Nvidia’s former flagship graphics card, featured 8 billion transistors packed onto a 601 square millimetre die. The GTX 1080 packs 7.2 billion transistors in a 314 square millimetre die.
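For a rough sense of what those figures mean, here is a minimal sketch that turns them into transistor density. It uses only the transistor counts and die sizes quoted above. The dictionary layout and the function name are illustrative choices, not anything from Nvidia.

```python
# Transistor counts and die areas as quoted in the review.
cards = {
    "GTX Titan X": {"transistors": 8.0e9, "die_mm2": 601},
    "GTX 1080": {"transistors": 7.2e9, "die_mm2": 314},
}


def density_millions_per_mm2(transistors, die_mm2):
    """Return transistor density in millions of transistors per square millimetre."""
    return transistors / die_mm2 / 1e6


densities = {name: density_millions_per_mm2(**spec) for name, spec in cards.items()}
for name, density in densities.items():
    print(f"{name}: {density:.1f} million transistors per mm^2")

# How much more tightly packed the GTX 1080 is than the Titan X.
ratio = densities["GTX 1080"] / densities["GTX Titan X"]
print(f"GTX 1080 is about {ratio:.2f}x as dense")
```

By these numbers the GTX 1080 squeezes roughly 1.7 times as many transistors into each square millimetre as the Titan X did.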

Numbers are very important when determining if you should buy a new graphics card. The 1080 has many numbers.

In layman’s terms, the GeForce GTX 1080 is fast. Its base clock speed of 1.61 GHz, which boosts to 1.73 GHz (and beyond with overclocking), is much higher than anything Nvidia’s put out before. Coupled with 8 gigs of GDDR5X memory running at 10 gigabits per second (not the second generation High Bandwidth Memory that was speculated, but still quite nice), the card is a demon on wheels, only without the wheels. It compresses better. It renders better. It smells slightly better. It even has a better introduction video.

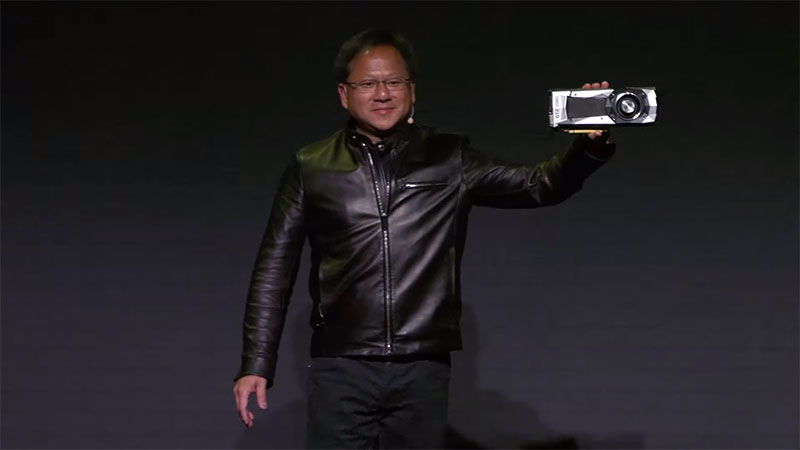

As well it should be. Presenting the card on stage at a special event earlier this month, Nvidia CEO Jen-Hsun Huang revealed that the Pascal architecture behind the GTX 1080 has been in the works for several years, with a research and development budget in the billions. No company spends that much time and money to hold up a product on stage that sucks.

Nothing is real until Nvidia CEO Jen-Hsun Huang holds it up on stage.

If you want incredibly detailed specs on the GeForce GTX 1080, Nvidia has an entire page filled with them. Rest assured that the card is packed with all the technology a growing computer needs and several bits that most don’t even need to worry about yet.

For example, do you need a graphics card capable of handling 4K HDR video content? How about simultaneous multi-projection, which takes geometry data and processes it through as many as 16 different projections from a single viewpoint? It will be nice for multi-monitor setups and boost virtual reality performance by up to two times, but it will have to wait until more applications support it. Still, nice to have it there.

On the opposite end of the spectrum we have the new Fast Sync technology, a boon for players of less demanding older games, where all of this extra power isn’t needed. It basically lets the GPU render as many frames per second as it pleases, with the hardware picking and choosing which frames to drop to keep things running smoothly. This allows players of games like Counter-Strike to avoid the latency spike of vsync without the tearing that comes from running without it.
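To make the frame-dropping idea concrete, here is a toy model of it. This is not Nvidia's implementation: it assumes frames finish at perfectly even intervals and that the display simply grabs the newest finished frame at each refresh. The function name `fast_sync_schedule` and the 200 FPS / 60 Hz figures are made up for illustration.

```python
def fast_sync_schedule(render_fps, refresh_hz, duration_s=1):
    """Pick, for each display refresh, the newest frame finished by then.

    Returns (shown, dropped) as lists of 1-based frame indices.
    Simplified model: evenly spaced frames, instant scan-out.
    """
    total_frames = render_fps * duration_s
    refreshes = refresh_hz * duration_s
    shown = []
    for r in range(1, refreshes + 1):
        # Frame i finishes at i / render_fps; refresh r fires at r / refresh_hz.
        # Integer division gives the newest finished frame without float drift.
        newest = min((r * render_fps) // refresh_hz, total_frames)
        if newest >= 1 and (not shown or shown[-1] != newest):
            shown.append(newest)
    shown_set = set(shown)
    dropped = [i for i in range(1, total_frames + 1) if i not in shown_set]
    return shown, dropped


# A hypothetical older game rendering at 200 FPS on a 60 Hz monitor.
shown, dropped = fast_sync_schedule(render_fps=200, refresh_hz=60)
print(f"shown: {len(shown)} frames, dropped: {len(dropped)} frames")
print(f"first frames on screen: {shown[:5]}")
```

In this model the GPU never waits on the display, which is where the latency saving over vsync would come from, and the display only ever shows whole frames, which is why there is no tearing.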

But enough about other people’s potential experience with the GeForce GTX 1080. Let’s talk about mine.

Nvidia sent along the Founders Edition of the GTX 1080. “Founders Edition” is a fancy way of saying reference card, the basic unit crafted by Nvidia, as opposed to the fancy, fan-festooned, overclocked monsters that third parties like EVGA and PNY will be bringing to the market. Unlike past basic boards, however, in the US the Founders Edition of the GTX 1080 is priced at $US699 ($968), $US100 ($138) over the suggested retail price of the card. Meanwhile, Australian pricing has not yet been confirmed, but we should know by the end of this week.

Wha? How? Well, the idea is the Founders Edition of the card is a premium quality card, machined exquisitely and cooled via fans and vapour chamber to perfection. It’s capable of being overclocked (I’ve gotten it up to 2GHz fiddling about with the EVGA Precision tool provided to reviewers), but it won’t ship that way.

It doesn’t really make much sense to me as a consumer, but I hear boutique PC manufacturers love the idea of a basic Nvidia-produced card available for the lifespan of the product.

Installation was simple. Unplug my old 980, throw it in the trash, set the trash can on fire, plug in the 1080, affix the single 8-pin power connector that provides all the juice this thing needs (only 180 watts), install the driver and then proceed to play many different games for minutes at a time.

But first, a trip to Unigine’s Heaven Benchmark.

Apparently I need to spend several hundred dollars to upgrade to the advanced edition.

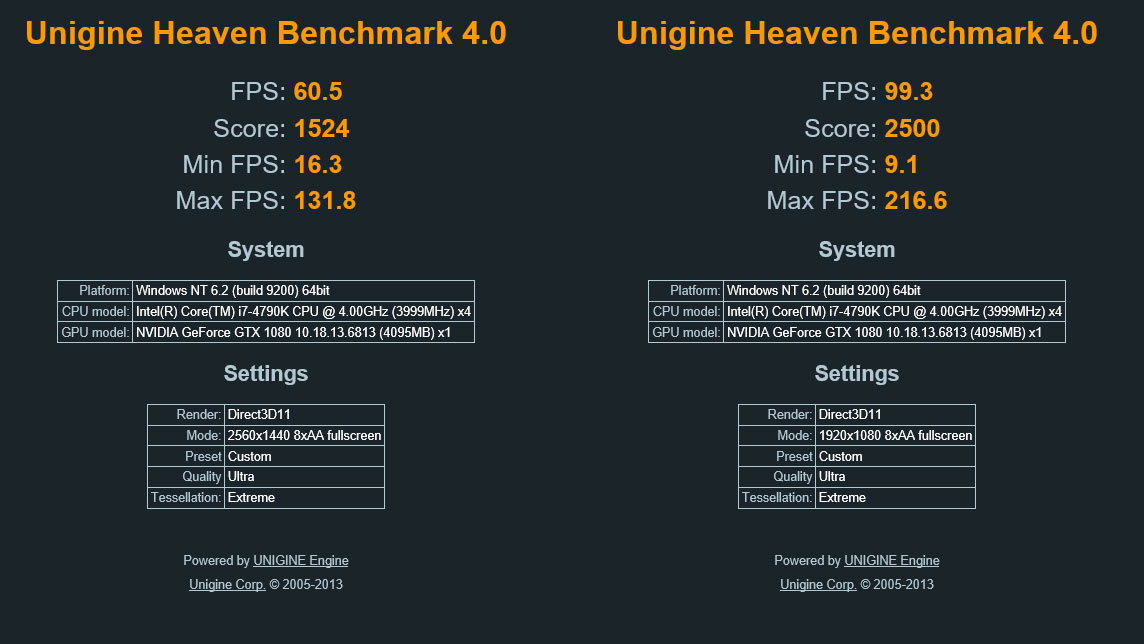

I used Heaven because it is pretty and the music that plays while the benchmark runs provides a lovely backdrop to whatever I am playing on my phone while I wait for it to finish. I ran the benchmark in both 1920×1080 and 2560×1440 resolutions. Note that I had a ton of other stuff running at the same time, which probably skewed the results a little lower, but my results are within a few points of others I’ve seen, so I trust them.

That’s the last time you’ll see 1920×1080 FPS numbers in this review. The GeForce GTX 1080 can run anything out there at standard HD resolution at 60 frames per second or higher. If you’ve no ambitions beyond 1080p, be it multiple monitors, something ultra HD or even a super-wide, then this is more card than you need right now.

Which means I got to hook up the old Philips 4K monitor for this review. I’d set it aside because I didn’t have a video card (or cards, really) that could make proper use of it without sacrificing quality, and what’s the point of going 4K if you’re going to have to turn down the settings?

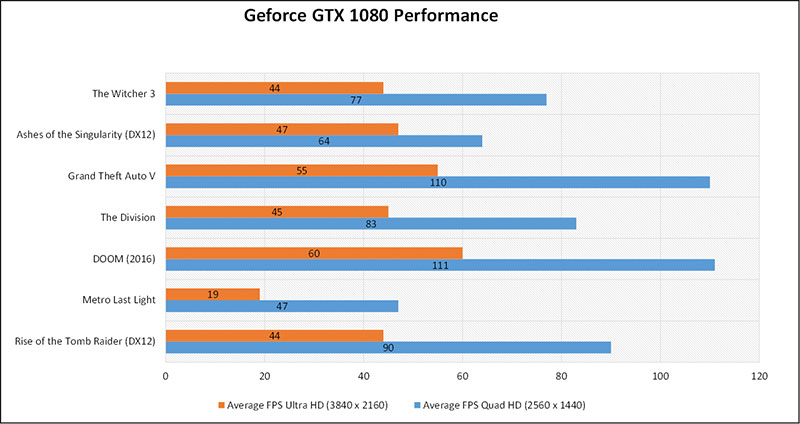

The better question here is does the GTX 1080 justify this 2160p monitor? It’s time for some numbers. The results in the chart below were taken with each game running at the highest settings possible. The data was then entered into Excel, formatted as a chart, pasted into PowerPoint and then saved as an image. Here is that image.

Numbers! People looking for a new graphics card love them, and here they are, speaking volumes about the performance of Nvidia’s latest. What do these particular numbers tell us?

For one, Metro Last Light continues to be a massive dick to graphics cards. At the resolution I said I wouldn’t mention again earlier, Metro Last Light got a frankly staggering 86 frames per second on average. Then it gave the GTX 1080 the finger. If you want to run that game at 4K resolution, check back in another couple of years.

As for the rest, not too shabby, GTX 1080. Each of the games I played and tested at least exceeded the “playable” average of 30 FPS at Ultra HD. Comparatively, I wouldn’t even bother trying with my old GTX 980, which Nvidia loves to compare its latest card to.

I prefer a silky smooth 60 frames per second myself, but it looks like the sacrifices required to get there at 4K wouldn’t be too great, and there’s always that happy medium, Quad HD.
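A quick bit of arithmetic shows why 4K is so much harder on a card than the other resolutions mentioned in this review. The sketch below just multiplies width by height. The 3840×2160 figure for 4K Ultra HD is the standard consumer definition rather than a number quoted above, and pixel count is only a rough proxy for GPU load.

```python
# Resolutions mentioned in the review, as (width, height) in pixels.
resolutions = {
    "1080p (Full HD)": (1920, 1080),
    "1440p (Quad HD)": (2560, 1440),
    "2160p (4K Ultra HD)": (3840, 2160),
}

base_pixels = 1920 * 1080

# Total pixels the GPU has to fill for every frame at each resolution.
pixel_counts = {name: w * h for name, (w, h) in resolutions.items()}
for name, pixels in pixel_counts.items():
    print(f"{name}: {pixels:,} pixels, {pixels / base_pixels:.2f}x 1080p")
```

4K works out to exactly four times the pixels of 1080p, while Quad HD is a little under double.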

If you’d like to see how the GTX 1080 fares against the graphics cards that came before it, along with power efficiency, temperature stats and overclocking stats, check out the review from our pals over at Techspot, who obviously have a multi-million dollar lab environment to work with. I’m just a man sitting in front of a tower case, asking its GPU to love him.

Love me.

The GeForce GTX 1080 loves me a great deal, and I love it back. The numbers are good, but I’m more excited about what’s coming down the line. Simultaneous multi-projection is going to work wonders for VR performance once the software starts supporting it, and I can’t wait for a Vulkan API version of Doom to come along so I can play at a completely ridiculous 200 frames per second.

But that’s then. What about now? Should you line up outside Nvidia’s corporate offices on May 27 to purchase a Founders Edition GeForce GTX 1080? They probably wouldn’t be selling it there, so that’s a no. And even if they were, I’m curious to see what kind of performance the third parties squeeze out of this beast. If there’s a chance an ASUS or MSI could release a version that kicks the reference card’s arse at a similar price point, waiting would be worth it.

Speaking of the GeForce GTX 1080 in general, it’s definitely the next big thing in graphics cards (until the next one comes along). It’s a bit more than a 1080p stickler needs, but those with bigger and better display needs are going to want one or two of these.

Comments

39 responses to “NVIDIA Geforce GTX 1080 Review: Time For An Upgrade”

Bit of an insane local price though no? Even ordering from the US and getting it shipped here would save you a few hundred dollars.

There is still no official Aussie price. Of course the importers are trying to rip us off.

Afaik the official Aussie RRP will be released ‘by 29 May’

Ah ok. Was just going off of the insane price mentioned in a Kotaku article last week. Waiting on the 1070 myself.

Yeah, I think one or some of the articles have been redacted to clarify all pricing so far is speculative or via re-sellers who are marking the price up.

Oh man can’t wait for this. My SLI 680 GTX’s are ready to be replaced.

*turned into dedicated PhysX cards 😉

Really? You can do that?

Yeah, you can dedicate an nVidia GPU to PhysX. If it’s a grunty but dated one, it’s perfect as it doesn’t support all the latest “make it pretty” cleverness but still has raw power. It makes a surprising difference even with a relatively low-spec GPU. Check this, even with a monster SLI setup, offloading PhysX to another GPU gives a good boost:

http://www.volnapc.com/all-posts/how-much-difference-does-a-dedicated-physx-card-make

I’m so happy, because I learnt about this from a throwaway comment on Kotaku, and now I’ve shared it with a throwaway comment on Kotaku…

Can you dedicate multiple cards to PhysX or are you only able to dedicate 1? (*has 580s in SLI*)

That I do not know. I have a single 680. It will either go into PhysX, or it will go into my I7 Shuttle PC. Or I’ll try a firmware change (if it has the right flash ROM on board) to make it a Mac card and it can go in my Mac Pro…

According to this person, a 580 made a difference with a 980 primary. http://www.overclockers.com/forums/showthread.php/753283-EVGA-GTX-980-and-use-GTX-580-for-PhysX

I think you can only use one for PhysX as the Nvidia control panel only lets you specify a GPU, CPU, or Auto. Multiple GPUs will just show up as individual items in the list.

Figured that would be the case. Ta. 🙂

It’d be great if it wasn’t limited to just PhysX, and could have all sorts of other computational stuff offloaded to it.

Anything using CUDA will also work, video rendering etc.

CUDA is still Nvidia-specific though isn’t it? As opposed to something done with OpenCL?

Just wondering whether the hardware-agnostic stuff could still be specifically directed to a secondary card while the primary handles all the graphical stuff in the same manner as this, at least without the program writer having to specifically code for it in the first place.

From nvidia:

A new configuration that’s now possible with PhysX is 2 non-matched (heterogeneous) GPUs. In this configuration, one GPU renders graphics (typically the more powerful GPU) while the second GPU is completely dedicated to PhysX. By offloading PhysX to a dedicated GPU, users will experience smoother gaming.

You can allocate a single card to solely perform PhysX computations, and you would be surprised how much of a difference it can make in performance with games that fully support it.

It’s been that way since the introduction of the 200 series I believe. I used to run a 580gtx and a 285 dedicated to PhysX for a while. Was nice, but not enough games made use of it back then. Came in handy when running material simulations though.

The prices on these things are insane. ‘Time for an upgrade’ my arse.

Australian price isn’t official yet. Going off the launch US price these are very affordable considering the power. If you already own say a 980 I would hold off a bit, but for owners of a 780 like myself the time has certainly come.

Going from a single 680 myself so even the 1070 should be a rather huge jump for me.

I don’t see myself grabbing one until well into 2017/early 2018.

I just upgraded everything for this exact purpose, to be just below par of what will be (gold) standard, but still economical and sound for what I want to use my PC for.

The ‘event games’ with some exceptions won’t be able to fully appreciate what this card can do because they will also have to be released on the consoles (static hardware) and now-previous gens anyway. Albatross around the neck. Until such time third parties (developers and otherwise, like Mr Fahey says) are able to focus wholly and solely on this cutting edge generation, and cut aside the older technology, I’ll be happy with what I’ve got.

This is the major milestone as well isn’t it? We won’t see substantial improvements for a while now, the focus will be on shrinking and refining this for consumer-brands (ie VR, laptops, even Apple gear) for the foreseeable future?

In the mean time, the price reductions on the not-bleeding-edge-anymore gear will probably encourage people like myself to experiment with DIY PC builds even more.

I’d consider the 1080Ti the major milestone. It runs the full-featured GP100 chipset and HBM2 as opposed to the GP104 with GDDR5X. I expect you’re right that the 11 series would be a smaller bump in comparison.

If a 1080ti comes there’s no way it’s going to be based on the GP100 chip, that’s for Tesla cards only. The GP100 has a 1:2 ratio of double to single precision performance, while the GP104 chip in the 1080 has a 1:32 ratio. It’s pretty clear Nvidia are making separate GPUs for the Geforce and Tesla lines now.

All of the rumours are pointing towards a future Titan and 1080ti being based on a GP102 chip, which will omit the extra double precision hardware just like the GP104, and also use GDDR5X.

All the mentions of a GP102 come from one forum thread on ChipHell, which also claims the 1060 will run GP104. As far as rumours go it’s pretty weak. Not saying it’s wrong but you gotta take that kind of thing with a grain of salt right now. We need more release info. The ratio is an interesting theory but the GK110 had a 3:1 ratio in hardware and still appeared in the 780Ti. Maxwell isn’t a good comparison because even the M40 had a 32:1 ratio, it was targeted at single precision from the outset.

It’s certainly possible they’ll make a middle range chip but it would be unique to this generation if so – the 780Ti ran the same GK110 chip as the Kepler series Teslas and the 980Ti used the same GM200 chip as the Maxwell series Teslas.

Well I know where my tax return is going.

I’m curious to know if those benchmarks were done when the GPU was overclocked or was it running stock? I thought the 4K numbers would be better than mid 40s but with a G-Sync monitor it should still look silky smooth. At the end of the day it all depends on the price we’ll have to pay for it…

Just as an FYI to everyone: we’ll also have an Australian review of the GTX 1080 up tomorrow, which will have more in-depth details for those looking for a more technical analysis. I’m also hoping to get in third-party cards over the coming weeks for even more comparison, so stay tuned!

Thanks Alex. Any word on a 1070 review embargo date?

There’s a media briefing happening right now, so maybe some 1070 details will come out of that. I’m not able to attend, mind you, although I will be going to Computex this year so one way or another I’ll have some info.

If you do a video review with Mark narrating I’ll dance at your wedding, Alex.

When you’re finished with your review, you should just feel inclined to disconnect it, wrap it up nicely and post it to one of your friendly Kotaku forum folks. My address available on request. 🙂

If it’s the same card Campbell Simpson mentioned recently, I already called dibs 😉

Dammit! 😛

I’ll stick with my 980ti SLI setup for the moment. Maybe when the 1080ti is announced I’ll upgrade.

I’d say you’ll be safe with that setup till at least the next generation of Nvidia cards haha

I was surprised at how power efficient this card is. Boggles my mind 😛

Waiting on the ASUS DirectCU IV version that has been teased on their twitter.

Due an upgrade at the end of the year…deciding on either a 1080 or SLI 1070. Won’t even bother considering it until I see the prices though 🙂

Regardless, my sli gtx770’s are beginning to show their age with a few games 🙁

Obviously this does depend on prices, but I’d go with the single 1080. Rule of thumb is to always go for the most powerful single card you can afford.

Too many issues with SLI on a lot of games nowadays.

If you’re looking at the end of the year, wait for the 1080Ti and make your decision then. I initially expected it late this year but the 1080 launched a month later than I thought so the Ti is probably January-February.